Learning Visual Representation from Audible Interactions

Abstract

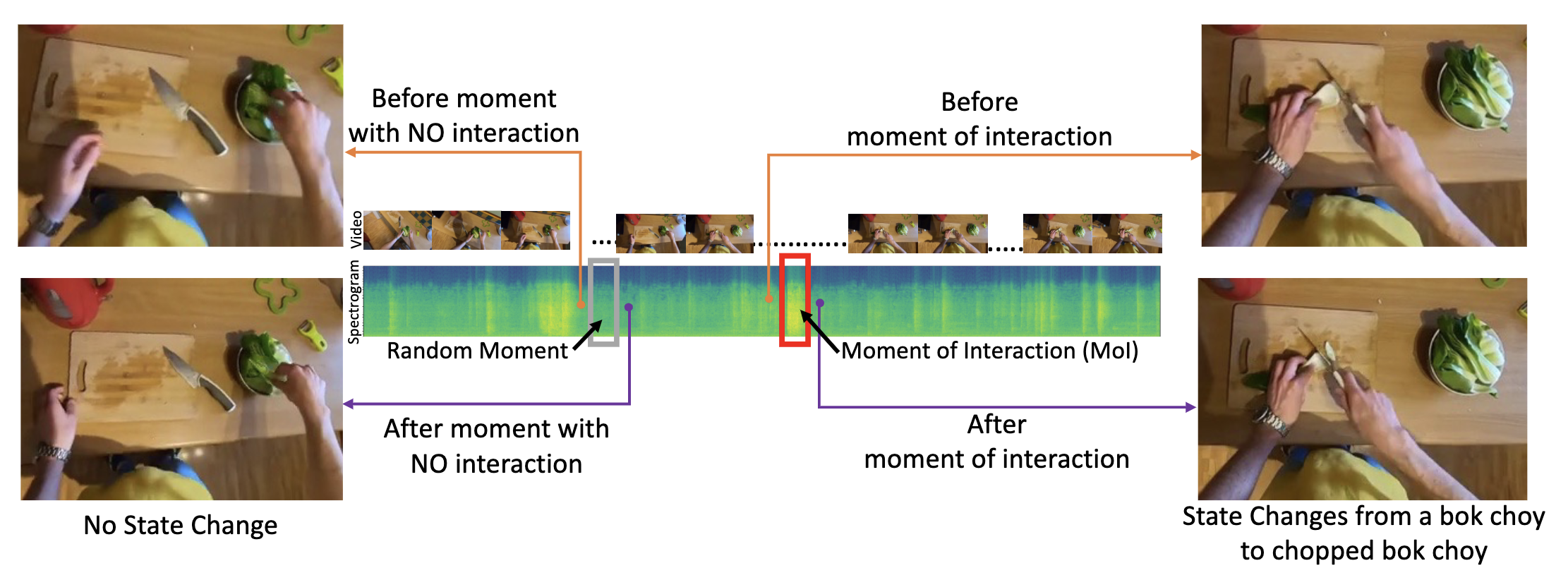

We propose a self-supervised algorithm to learn representations from egocentric video data. Recently, significant efforts have been made to capture humans interacting with their own environments as they go about their daily activities. As a result, several large egocentric datasets of interaction-rich multi-modal data have emerged. However, learning representations from videos can be challenging. First, given the uncurated nature of long-form continuous videos, learning effective representations requires focusing on moments in time when interactions take place. Second, visual representations of daily activities should be sensitive to changes in the state of the environment. However, current successful multi-modal learning frameworks encourage representation invariance over time. To address these challenges, we leverage audio signals to identify moments of likely interactions, which are conducive to better learning. We also propose a novel self-supervised objective that learns from audible state changes caused by interactions. We validate these contributions extensively on two large-scale egocentric datasets, Epic-Kitchens and the recently released Ego4D, and show improvements on several downstream tasks, including action recognition, long-term action anticipation, and object state change classification.

Published at: Neural Information Processing Systems (NeurIPS), New Orleans, 2022.

Bibtex

@InProceedings{mittal2022_replai,

title={Learning Visual Representation from Audible Interactions},

author={Himangi Mittal and Pedro Morgado and Unnat Jain and Abhinav Gupta},

booktitle={Advances in Neural Information Processing Systems (NeurIPS)},

year={2022}

}